Luckily I have not been the victim of a slowloris attack, but that is no reason not to take precautions. So when I was setting up a web server based on Apache, MySQL and PHP5, I figured I might as well add a caching frontend for Apache. This how-to assumes you have basic knowledge of FreeBSD and that Apache is already installed.

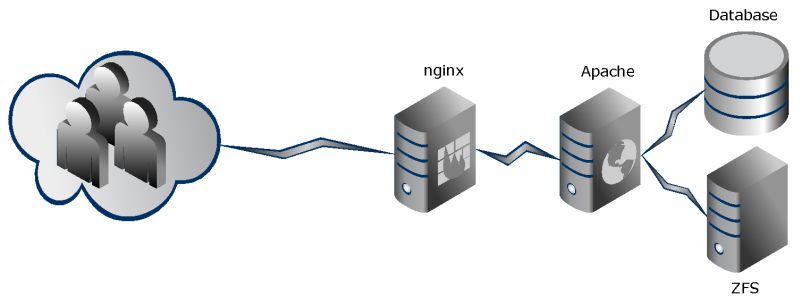

The idea is to let Apache do the heavy lifting on the backend and let nginx serve the files to the visitors. This frees up Apache to focus on generating the pages and data needed, hand them to nginx and be ready for the next job right away. nginx then serves the files to each visitor at whatever speed their connection allows, using far fewer resources.

As you can see, my skills with Gliffy are very limited, but you should get the picture (npi).

First we change Apache to suit our needs. Because the requests now come from a proxy and not from the real visitors, we need to install mod_rpaf. This Apache module makes proxied requests appear with the original client IP, so we can still use the Apache logs and sites can still use IP information about their users. Once it is installed from ports, add two lines to httpd.conf and uncomment the module line.

RPAFenable On

RPAFproxy_ips 127.0.0.1

Now set Apache to listen only on localhost, on a port other than 80, in httpd.conf. My setup uses 8080. Remember to set your vhosts in Apache to 127.0.0.1:8080 as well.

Listen 127.0.0.1:8080

and

NameVirtualHost 127.0.0.1:8080
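For the vhosts themselves, a minimal sketch of a matching Apache entry could look like the following. The hostname and the DocumentRoot path are placeholders I made up for illustration, so substitute your own values.

```apache
# Hypothetical vhost; ServerName and DocumentRoot are assumed values
<VirtualHost 127.0.0.1:8080>
    ServerName your-hostname
    DocumentRoot /usr/local/www/your-hostname
</VirtualHost>
```

The address and port in the VirtualHost tag need to match the NameVirtualHost line, otherwise Apache will not treat the entry as a name-based vhost on that socket.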

That’s it for the Apache configuration; next up is nginx. When you install nginx, make sure you select the rewrite module, the SSL module and the cache module. If you want simple server statistics, select the status module too. I created a dedicated user for nginx and added it to the www group alongside Apache. I have no clear reason for this, it just seemed right. My nginx configuration is split into three parts: the main nginx configuration, the proxy/cache configuration and the vhosts.

First the nginx.conf

user nginx www;

worker_processes 2;

error_log /var/log/nginx/nginx-error.log;

#error_log logs/error.log notice;

#error_log logs/error.log info;

pid /var/run/nginx.pid;

events {

worker_connections 8192;

}

http {

include mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/nginx-access.log main;

sendfile on;

#tcp_nopush on;

#keepalive_timeout 0;

keepalive_timeout 65;

proxy_cache_path /var/nginx/cache levels=1:2 keys_zone=my-cache:8m max_size=1000m inactive=600m;

proxy_temp_path /var/nginx/cache/tmp;

proxy_cache_key "$scheme$host$request_uri";

gzip on;

server {

#listen your-external-ip:80;

server_name _;

#access_log /var/log/host.access.log main;

location /nginx_status {

stub_status on;

access_log off;

}

# redirect server error pages to the static page /50x.html

#

#error_page 404 /404.html;

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root /usr/local/www/nginx-dist;

}

location / {

proxy_pass http://127.0.0.1:8080;

include /usr/local/etc/nginx/proxy.conf;

}

}

include /usr/local/etc/nginx/vhosts/*;

}

Then proxy.conf

proxy_buffering on;

proxy_redirect off;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

client_max_body_size 10m;

client_body_buffer_size 128k;

proxy_connect_timeout 90;

proxy_send_timeout 90;

proxy_read_timeout 90;

proxy_buffers 100 8k;

Now the vhost

server {

listen your-external-ip:80;

server_name your-hostname;

error_log /var/log/nginx/your-hostname-error.log;

access_log /var/log/nginx/your-hostname-access.log main;

location / {

proxy_pass http://127.0.0.1:8080;

include /usr/local/etc/nginx/proxy.conf;

}

}
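One thing worth checking: `proxy_cache_path` in nginx.conf only declares the `my-cache` zone, and nginx does not store any responses until a `proxy_cache` directive refers to that zone. A minimal sketch of the vhost location with caching switched on might look like this. The lifetimes are assumed values for illustration, not taken from the setup above.

```nginx
location / {
    proxy_pass http://127.0.0.1:8080;
    include /usr/local/etc/nginx/proxy.conf;

    # Use the zone declared by proxy_cache_path in nginx.conf
    proxy_cache my-cache;

    # Assumed lifetimes, applied when the backend sends no caching headers
    proxy_cache_valid 200 302 10m;
    proxy_cache_valid 404 1m;
}
```

By default nginx skips caching for responses that carry a Set-Cookie header or a Cache-Control of private, no-cache or no-store, so per-user pages generated by PHP should still pass straight through to Apache.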

That’s it, you are now running a caching nginx frontend with Apache backend.

A few notes: in nginx.conf, ‘server_name _;’ acts as a catch-all, so any traffic not covered by a vhost ends up there, which is handy for debugging if you have made mistakes.
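As written, the /nginx_status location in the catch-all server answers anyone who asks for it. If you would rather keep the numbers to yourself, a sketch like the one below limits it to requests from the machine itself. The allowed address is an assumption, so add your own admin IP if you check the page remotely.

```nginx
location /nginx_status {
    stub_status on;
    access_log off;

    # Assumption: the status page is only queried from the server itself
    allow 127.0.0.1;
    deny all;
}
```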

#1 by adi on 31. March, 2011 - 10:07

I’m a little bit curious about this nginx cache. Does it cache files for a site which has dynamic content (e.g. YouTube)?

#2 by Miklos on 31. March, 2011 - 10:11

No, the setup here caches local files. YouTube embeds would not be cached, as only the client sees the actual data from YouTube 🙂
